Tree models are among the most widely used machine learning methods in modern business systems.

From fraud detection and churn prediction to logistics risk scoring and pricing optimization, tree-based models power decisions across industries.

But outside technical teams, there’s often confusion:

- Why are tree models so popular?

- When do they truly outperform simpler models?

- When are they unnecessary complexity?

- What makes them powerful in business environments?

This article examines tree models from a business systems perspective rather than a theoretical one.

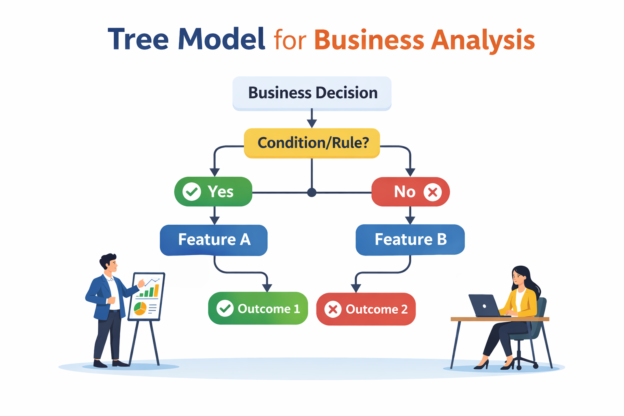

1. What a Tree Model Actually Does (Without the Textbook)

At its core, a tree model divides the world into segments.

It repeatedly asks:

- Is this value above or below a threshold?

- Does this condition hold?

- Is this category present?

Each split narrows the population into smaller groups with increasingly similar outcomes.

Instead of fitting a single global equation (like logistic regression), a tree model builds localized decision regions.

That distinction matters in business contexts.

Because business behavior is rarely smooth.

It is often:

- Threshold-based

- Interaction-driven

- Segment-specific

- Nonlinear

- Operationally conditional

Tree models are built to handle that reality.
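To make the "above or below a threshold" question concrete, here is a minimal sketch of how a single split might be chosen. It assumes Gini impurity as the split criterion (one common choice, not the only one), and the function names and the transaction data are hypothetical, made up for illustration.

```python
from collections import Counter

def gini(labels):
    """Gini impurity of a list of class labels (0.0 means perfectly pure)."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

def best_split(values, labels):
    """Return (threshold, weighted_gini) for the best 'value <= threshold' split."""
    pairs = sorted(zip(values, labels))
    best = (None, float("inf"))
    for i in range(1, len(pairs)):
        if pairs[i - 1][0] == pairs[i][0]:
            continue  # no threshold can separate identical values
        threshold = (pairs[i - 1][0] + pairs[i][0]) / 2
        left = [y for _, y in pairs[:i]]
        right = [y for _, y in pairs[i:]]
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(pairs)
        if score < best[1]:
            best = (threshold, score)
    return best

# Hypothetical data: transaction amounts and whether each one was fraudulent.
amounts = [20, 35, 50, 80, 120, 900, 1100, 1500, 2000, 2500]
is_fraud = [0, 0, 0, 0, 0, 1, 1, 1, 1, 1]

threshold, impurity = best_split(amounts, is_fraud)
print(threshold, impurity)
```

A full tree repeats this search inside each resulting group, across every feature, until a stopping rule is reached.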

2. Types of Tree Models Used in Business Systems

Understanding the differences helps clarify when to use each.

2.1 Single Decision Trees

- Fully interpretable

- Simple structure

- Easy to explain to stakeholders

- Often unstable alone

Business use:

- Policy simulation

- Rule extraction

- High-interpretability requirements

Limitations:

- Sensitive to small data changes

- Often underperform on complex datasets

2.2 Random Forests

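- Many trees trained independently, each on a bootstrap sample of the data with a random subset of features considered at each split

- Predictions averaged (or majority-voted for classification)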

- More stable than a single tree

Business use:

- Moderate complexity problems

- When tuning resources are limited

- When variance reduction is needed

Trade-off:

- Less interpretable than a single tree

- Harder to reason about globally
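The averaging idea can be sketched in a few lines. This is plain bagging of one-split trees on a single feature, which is only the averaging half of a random forest: per-split feature subsampling is left out because there is just one feature. The function names and the delay data are hypothetical.

```python
import random
from statistics import mean

def fit_stump(xs, ys):
    """One-split regression tree: choose the threshold with the lowest squared error."""
    pairs = sorted(zip(xs, ys))
    best = None
    for i in range(1, len(pairs)):
        if pairs[i - 1][0] == pairs[i][0]:
            continue  # identical x values cannot be separated
        left = [y for _, y in pairs[:i]]
        right = [y for _, y in pairs[i:]]
        lm, rm = mean(left), mean(right)
        sse = sum((y - lm) ** 2 for y in left) + sum((y - rm) ** 2 for y in right)
        if best is None or sse < best[0]:
            best = (sse, (pairs[i - 1][0] + pairs[i][0]) / 2, lm, rm)
    if best is None:  # every x in the sample was identical: predict the mean
        overall = mean(ys)
        return lambda x: overall
    _, threshold, lm, rm = best
    return lambda x: lm if x <= threshold else rm

def fit_bagged(xs, ys, n_trees=50, seed=0):
    """Train each stump on a bootstrap resample and average their predictions."""
    rng = random.Random(seed)
    n = len(xs)
    trees = []
    for _ in range(n_trees):
        idx = [rng.randrange(n) for _ in range(n)]  # sample rows with replacement
        trees.append(fit_stump([xs[i] for i in idx], [ys[i] for i in idx]))
    return lambda x: mean(tree(x) for tree in trees)

# Hypothetical data: route distance (km) and observed delay rate.
distance_km = [100, 200, 300, 400, 500, 600, 700, 800, 900, 1000]
delay_rate = [0.04, 0.05, 0.05, 0.06, 0.07, 0.28, 0.29, 0.30, 0.31, 0.32]

bagged = fit_bagged(distance_km, delay_rate)
print(bagged(100), bagged(550), bagged(1000))
```

Each resample can place its split at a slightly different distance, so no single tree's choice dominates the averaged prediction. That is the source of the added stability.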

2.3 Gradient Boosting (XGBoost, LightGBM, CatBoost)

- Trees built sequentially

- Each new tree is fit to the errors left by the trees before it

- Often best-performing on structured/tabular data

Business use:

- Fraud detection

- Risk scoring

- Conversion modeling

- Demand forecasting (tabular)

Trade-off:

- Requires tuning

- Needs monitoring

- Can overfit if unmanaged

In most enterprise environments, gradient boosting is the dominant tree-based approach.
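The "sequential correction" idea can be sketched as follows. This is a bare-bones illustration of gradient boosting with squared-error loss, where each one-split tree is fit to the current residuals. It is not how XGBoost, LightGBM, or CatBoost are implemented; those libraries add regularization, subsampling, and many optimizations. The function names, the learning rate, and the data are assumptions for illustration.

```python
from statistics import mean

def fit_stump(xs, ys):
    """One-split regression tree; assumes at least two distinct x values."""
    pairs = sorted(zip(xs, ys))
    best = None
    for i in range(1, len(pairs)):
        if pairs[i - 1][0] == pairs[i][0]:
            continue
        left = [y for _, y in pairs[:i]]
        right = [y for _, y in pairs[i:]]
        lm, rm = mean(left), mean(right)
        sse = sum((y - lm) ** 2 for y in left) + sum((y - rm) ** 2 for y in right)
        if best is None or sse < best[0]:
            best = (sse, (pairs[i - 1][0] + pairs[i][0]) / 2, lm, rm)
    _, threshold, lm, rm = best
    return lambda x: lm if x <= threshold else rm

def fit_boosted(xs, ys, n_rounds=20, learning_rate=0.5):
    """Start from the mean, then repeatedly fit a stump to the remaining residuals."""
    base = mean(ys)
    residuals = [y - base for y in ys]
    stumps = []
    for _ in range(n_rounds):
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        # Shrink each correction by the learning rate before subtracting it.
        residuals = [r - learning_rate * stump(x) for x, r in zip(xs, residuals)]
    return lambda x: base + learning_rate * sum(s(x) for s in stumps)

def mse(model, xs, ys):
    return mean((model(x) - y) ** 2 for x, y in zip(xs, ys))

# Hypothetical data with two jumps, so a single split cannot fit it.
xs = list(range(1, 10))
ys = [1, 1, 1, 5, 5, 5, 9, 9, 9]

mse_1 = mse(fit_boosted(xs, ys, n_rounds=1), xs, ys)
mse_20 = mse(fit_boosted(xs, ys, n_rounds=20), xs, ys)
print(mse_1, mse_20)
```

Training error keeps falling as rounds are added, which is also why an unmanaged boosted model can overfit: the number of rounds and the learning rate need to be chosen against held-out data.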

3. Why Tree Models Perform Well in Business Data

The strength of tree models is not magic.

It comes from how business data behaves.

3.1 Business Systems Are Full of Threshold Effects

Examples:

- Fraud risk spikes sharply after transaction amount crosses a limit.

- Shipping delay probability jumps after route distance exceeds a threshold.

- Conversion drops rapidly when price increases beyond a certain point.

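Linear models smooth these effects: without a hand-built threshold feature, a sharp jump gets spread across the whole range of the feature.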

Tree models split directly at the threshold.

This makes them naturally suited to operational decision boundaries.
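A small comparison illustrates the difference. The sketch below fits a straight line and a one-split tree to the same step-shaped data and compares their squared error. The data is invented for illustration (a delay rate that jumps past roughly 500 km), and the function names are hypothetical.

```python
from statistics import mean

def fit_line(xs, ys):
    """Ordinary least squares fit of y = a + b * x."""
    mx, my = mean(xs), mean(ys)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    intercept = my - slope * mx
    return lambda x: intercept + slope * x

def fit_stump(xs, ys):
    """One-split regression tree; assumes at least two distinct x values."""
    pairs = sorted(zip(xs, ys))
    best = None
    for i in range(1, len(pairs)):
        if pairs[i - 1][0] == pairs[i][0]:
            continue
        left = [y for _, y in pairs[:i]]
        right = [y for _, y in pairs[i:]]
        lm, rm = mean(left), mean(right)
        sse = sum((y - lm) ** 2 for y in left) + sum((y - rm) ** 2 for y in right)
        if best is None or sse < best[0]:
            best = (sse, (pairs[i - 1][0] + pairs[i][0]) / 2, lm, rm)
    _, threshold, lm, rm = best
    return lambda x: lm if x <= threshold else rm

def mse(model, xs, ys):
    return mean((model(x) - y) ** 2 for x, y in zip(xs, ys))

# Hypothetical data: delay rate is flat, then jumps once distance passes about 500 km.
distance_km = [100, 200, 300, 400, 500, 600, 700, 800, 900, 1000]
delay_rate = [0.05, 0.04, 0.06, 0.05, 0.05, 0.30, 0.31, 0.29, 0.30, 0.32]

line = fit_line(distance_km, delay_rate)
stump = fit_stump(distance_km, delay_rate)
line_mse = mse(line, distance_km, delay_rate)
stump_mse = mse(stump, distance_km, delay_rate)
print(line_mse, stump_mse)
```

The line underestimates risk just above the jump and overestimates it just below, while the split lands between the two regimes.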

3.2 Feature Interactions Are Often Unknown

Consider:

- High-value transaction × new account × foreign IP

- Heavy shipment × long distance × specific carrier

- High churn risk × recent downgrade × low engagement

You could manually engineer interaction terms.

But in real systems, interactions are numerous and unpredictable.

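Tree models can pick these up without being told where to look, because a split made inside one branch applies only to the segment defined by the splits above it.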

This is one of their strongest advantages in applied settings.
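The sketch below shows why an interaction can hide from one-feature-at-a-time analysis. In this invented dataset, fraud occurs only when an account is both new and on a foreign IP. The field names and the `rate_by` helper are hypothetical.

```python
from collections import defaultdict

def rate_by(records, key):
    """Fraud rate per segment, where key maps a record to its segment."""
    totals = defaultdict(lambda: [0, 0])  # segment -> [fraud_count, row_count]
    for record in records:
        segment = key(record)
        totals[segment][0] += record["fraud"]
        totals[segment][1] += 1
    return {segment: fraud / rows for segment, (fraud, rows) in totals.items()}

# Hypothetical data: 25 rows per flag combination, fraud only when both flags are set.
records = [
    {"new_account": a, "foreign_ip": f, "fraud": int(a and f)}
    for a in (0, 1)
    for f in (0, 1)
    for _ in range(25)
]

by_account = rate_by(records, lambda r: r["new_account"])
by_pair = rate_by(records, lambda r: (r["new_account"], r["foreign_ip"]))
print(by_account)
print(by_pair)
```

Looking at new accounts alone shows a 50% fraud rate. Splitting on the second flag inside that branch, which is what a two-level tree does, isolates the segment where the rate is 100%.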

3.3 Tabular Enterprise Data Is Structured

Tree models excel with:

- Numeric features

- Categorical variables

- Flags

- Aggregated behavior windows

- Sparse business indicators

They do not require feature scaling.

They handle heterogeneous data cleanly.

That makes them ideal for most enterprise analytics datasets.

4. Real Business Use Cases Where Tree Models Create Value

Let’s move from theory to real application.

4.1 Fraud Detection

Characteristics:

- Highly nonlinear

- Strong interactions

- Rapidly evolving patterns

- Imbalanced classes

Tree-based models:

- Capture complex interaction effects

- Detect new risk combinations

- Provide robust ranking signals

Why they often win:

Fraud patterns rarely follow simple linear relationships.

But operationally, a fraud model:

- Requires monitoring

- Needs drift detection

- Must be retrained frequently

Tree models are powerful here — but operationally demanding.

4.2 Customer Churn Prediction

Characteristics:

- Gradual behavioral shifts

- Segment-specific patterns

- Usage decay dynamics

Tree models:

- Capture nonlinear churn triggers

- Detect interaction between engagement signals

However:

If features already capture trend, recency, and frequency cleanly, logistic regression may perform similarly.

The difference often depends on feature maturity.

4.3 Logistics and Delivery Risk

Characteristics:

- Operational constraints

- Carrier variability

- Route complexity

- Threshold risk escalation

Tree models:

- Model route-specific splits

- Capture carrier-route interactions

- Handle heavy/large shipments differently

In supply chain systems, nonlinear risk spikes are common.

Tree models frequently outperform simpler approaches here.

4.4 Pricing and Elasticity Modeling (Structured Data)

Tree models:

- Capture nonlinear price sensitivity

- Detect segment-specific elasticity

- Handle interaction between user segment and price level

But caution:

If you require interpretable elasticity curves, linear models may still be preferable.

5. Where Tree Models Fail to Add Meaningful Value

Tree models are powerful — but not universally superior.

5.1 When Interpretability Is Mandatory

In:

- Credit approval

- Insurance underwriting

- Healthcare risk scoring

- Regulatory environments

You may need:

- Clear directional explanation

- Stable coefficient behavior

- Simple decision justification

Tree models can be explained — but not as cleanly as linear models.

Sometimes that trade-off matters more than performance.

5.2 When Feature Engineering Is Already Strong

If you have:

- Time-windowed aggregates

- Normalized rates

- Interaction features

- Trend and slope metrics

You may already have encoded nonlinear structure.

In those cases, the incremental gain from tree models may be small.

The bottleneck becomes data quality — not algorithm sophistication.

5.3 When Infrastructure and Monitoring Are Weak

Tree models require:

- Hyperparameter tuning

- Performance tracking

- Drift monitoring

- Retraining schedules

- Feature consistency

Without mature ML infrastructure, they can degrade silently.

In smaller teams, simpler models may be safer and more sustainable.
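As one illustration of what drift monitoring can look like, the sketch below computes a Population Stability Index (PSI) for a single feature. PSI is a commonly used drift metric chosen here as an example; the article does not prescribe a specific one. The bin edges, the sample values, and the `psi` function name are assumptions.

```python
import math

def psi(expected, actual, edges, eps=1e-6):
    """Population Stability Index between two non-empty samples over shared bin edges."""
    def shares(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[sum(v > e for e in edges)] += 1  # index of the bin v falls into
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    e_shares, a_shares = shares(expected), shares(actual)
    return sum((a - e) * math.log(a / e) for e, a in zip(e_shares, a_shares))

# Hypothetical feature values at training time versus in production.
train_amounts = [20, 30, 40, 60, 70, 80, 120, 150, 180, 250]
live_amounts = [60, 90, 120, 150, 180, 220, 250, 300, 400, 500]
edges = [50, 100, 200]

no_shift = psi(train_amounts, train_amounts, edges)
shifted = psi(train_amounts, live_amounts, edges)
print(no_shift, shifted)
```

Identical distributions score zero, and the score grows as the production distribution moves away from the training one. Tracking a number like this per feature on a schedule is one way to catch silent degradation early.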

6. Operational Trade-Offs: The Hidden Cost of Tree Models

Most model comparison discussions ignore system costs.

Key considerations:

| Factor | Logistic Regression | Tree Models |
|---|---|---|
| Interpretability | High | Moderate |
| Nonlinear capture | Limited | Strong |
| Interaction detection | Manual | Automatic |
| Tuning complexity | Low | Medium–High |
| Monitoring needs | Moderate | High |
| Infrastructure burden | Low | Medium–High |

The decision is rarely just about ROC-AUC.

It’s about system fit.

7. A Business-First Decision Framework

Instead of asking:

“Are tree models better?”

Ask:

- Are nonlinear effects likely strong?

- Are interactions numerous and unknown?

- Is predictive accuracy materially valuable?

- Can we support operational complexity?

- Is reduced interpretability acceptable?

If most answers are yes → tree models are strong candidates.

If not → simpler models may suffice.

8. The Strategic Insight

Tree models are not superior because they are newer.

They are superior when:

- The problem structure is nonlinear.

- Interaction effects drive the outcome.

- Data is rich and structured.

- Infrastructure supports lifecycle management.

They are unnecessary when:

- Simplicity is enough.

- Interpretability dominates.

- Business lift is marginal.

- System constraints limit complexity.

The maturity lies not in knowing how to train XGBoost — but in knowing when it’s justified.

Final Thoughts

Tree models are foundational tools in applied machine learning for business use cases.

They excel in structured, interaction-heavy, nonlinear environments.

But like any tool, they create value only when aligned with the system around them.

The best business data science teams don’t default to tree models.

They evaluate trade-offs — performance, interpretability, infrastructure, and impact.

That’s what separates algorithm choice from applied judgment.
