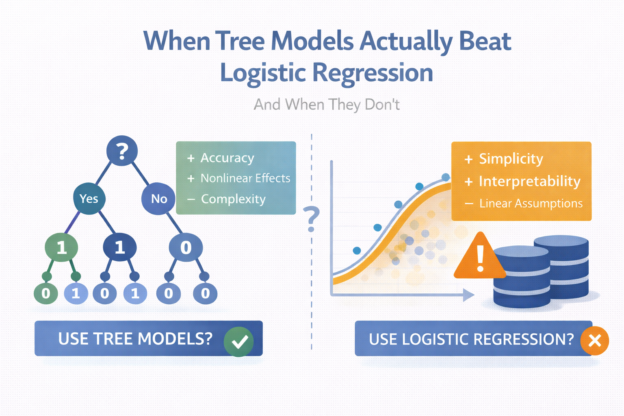

If you work in applied data science long enough, you’ll eventually hear some version of this question:

“Should we switch from logistic regression to a tree-based model?”

Sometimes the answer is yes.

Very often, the answer is “not really.”

Despite how popular tree models like decision trees, random forests, and gradient boosting have become, they don’t automatically outperform logistic regression in real business settings. The difference comes down to when you use them, why you use them, and what problem you’re actually solving.

This article walks through when tree models truly add value, when they don’t, and how experienced teams make that call in practice.

Logistic Regression vs Tree Models: The Real Difference

At a high level, the distinction is often described like this:

- Logistic regression assumes the log-odds of the outcome are a linear function of the features.

- Tree models can capture nonlinearities and complex interactions automatically.

That description is technically correct—but incomplete.

In practice, the difference is less about math and more about:

- Data quality

- Feature design

- Business constraints

- Operational complexity

Understanding those factors matters far more than knowing which model is “more powerful.”

When Tree Models Actually Beat Logistic Regression

Let’s start with the cases where tree models genuinely earn their keep.

1. When Feature Interactions Are Real—and Hard to Engineer

Context

You’re working on a problem where outcomes depend on combinations of features rather than individual signals.

Examples:

- Fraud risk depends on amount × merchant × time of day

- Delivery failure depends on carrier × route × weather

- Conversion depends on device × geography × traffic source

Why logistic regression struggles

You can model interactions manually, but:

- You need to know which ones matter

- The number of combinations grows quickly

- Feature space becomes hard to manage
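
To get a feel for how quickly manual interaction terms pile up, here is a small sketch. The feature names are hypothetical, and it counts only which columns would be combined, not the extra columns created by one-hot encoding each categorical feature.

```python
from itertools import combinations
from math import comb

def interaction_terms(features, max_order):
    """List every combination of 2..max_order distinct features."""
    terms = []
    for order in range(2, max_order + 1):
        terms.extend(combinations(features, order))
    return terms

# Hypothetical feature names, for illustration only.
features = ["amount", "merchant", "hour", "device", "geo", "source"]

pairs = interaction_terms(features, 2)
up_to_three = interaction_terms(features, 3)
print(len(pairs))        # 15 pairwise terms
print(len(up_to_three))  # 35 terms once three-way combinations are included

# The same count for a 50-column table, without materializing the terms.
print(comb(50, 2) + comb(50, 3))  # 20825
```

Six features are manageable. Fifty are not, unless you already know which combinations matter.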

Why tree models win here

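Tree-based models split on one feature at a time, but every split is conditional on the splits above it, so each path through a tree encodes a combination of features. That is how they uncover interactions you didn’t explicitly design.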

In these cases, tree models often deliver a meaningful performance lift, especially early on, before anyone has engineered the relevant interaction features by hand.
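
A toy illustration of the point, assuming two hypothetical binary features and an XOR-style label (the textbook case of a pure interaction): no linear score on the raw features classifies all four cases, while two nested splits do. The weight grid is a brute-force stand-in for fitting a main-effects logistic regression, not a real training procedure.

```python
from itertools import product

# Hypothetical toy data: (is_large_amount, is_night) -> label.
# The label is 1 only when exactly one of the two flags is set (XOR).
rows = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

def accuracy(predict):
    return sum(predict(x) == y for x, y in rows) / len(rows)

def linear_rule(w1, w2, bias):
    """Main-effects-only decision rule, like a logistic regression cut at p = 0.5."""
    return lambda x: int(w1 * x[0] + w2 * x[1] + bias > 0)

# Brute-force a coarse grid of weights; no setting gets all four rows right.
grid = [v / 2 for v in range(-6, 7)]  # -3.0, -2.5, ..., 3.0
best_linear = max(accuracy(linear_rule(w1, w2, b))
                  for w1, w2, b in product(grid, repeat=3))

def tiny_tree(x):
    """Depth-2 tree: the second split depends on the outcome of the first."""
    is_large_amount, is_night = x
    if is_large_amount:
        return 0 if is_night else 1
    return 1 if is_night else 0

print(best_linear)          # 0.75
print(accuracy(tiny_tree))  # 1.0
```

Adding the product of the two flags as a third feature would let the linear rule succeed as well, which is exactly the manual interaction engineering a tree spares you.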

2. When Relationships Are Strongly Nonlinear

Some patterns simply aren’t close to linear.

Examples:

- Risk spikes only after a threshold

- User behavior changes abruptly after a certain count

- Effects saturate quickly and then flatten

Tree models handle these patterns well because:

- They split data into regions

- They don’t assume monotonic effects

- They adapt locally instead of globally

If your exploratory analysis shows sharp bends or step-like behavior, tree models are often a better fit.
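
As a rough sketch of how a tree finds a step, the snippet below searches for the single threshold that minimizes weighted Gini impurity, a criterion commonly used for classification splits. The data and the names `login_failures` and `flagged` are invented for illustration.

```python
def gini(labels):
    """Gini impurity of a list of 0/1 labels."""
    if not labels:
        return 0.0
    p = sum(labels) / len(labels)
    return 2 * p * (1 - p)

def best_split(xs, ys):
    """Return (threshold, weighted impurity) of the best single split on xs."""
    values = sorted(set(xs))
    best = (None, float("inf"))
    # Candidate thresholds are midpoints between consecutive observed values.
    for lo, hi in zip(values, values[1:]):
        t = (lo + hi) / 2
        left = [y for x, y in zip(xs, ys) if x <= t]
        right = [y for x, y in zip(xs, ys) if x > t]
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(ys)
        if score < best[1]:
            best = (t, score)
    return best

# Hypothetical step pattern: risk appears only from the fifth failure onward.
login_failures = [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]
flagged        = [0, 0, 0, 0, 0, 1, 1, 1, 1, 1]

threshold, impurity = best_split(login_failures, flagged)
print(threshold, impurity)  # 4.5 0.0
```

A logistic regression on the raw count would have to approximate this jump with a smooth curve, unless you hand it a flag for the threshold.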

3. When You Need Strong Performance Fast (and Accept Complexity)

In some situations, performance matters more than interpretability.

Common examples:

- Internal ranking systems

- Pre-filtering pipelines

- Recommendation candidates

- Low-risk automation layers

Tree models—especially gradient boosting—often deliver:

- Strong baseline performance

- Minimal manual feature interaction work

- Fast iteration in early stages

If the model is not directly exposed to users or regulators, this trade-off can make sense.

When Tree Models Don’t Actually Beat Logistic Regression

Now for the less talked-about part.

Many teams move to tree models expecting major gains—and don’t get them.

Here’s why.

1. When Features Are Already Well-Engineered

In many mature systems, the heavy lifting is already done upstream.

If your feature set includes:

- Time-windowed aggregates

- Normalized rates

- Trend and change features

- Carefully defined flags

Then logistic regression often performs surprisingly close to tree models.

At that point, the model choice matters less than people expect.

The data representation is doing most of the work.
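
For a sense of what such features look like, here is a minimal sketch that derives a windowed count, a normalized rate, a change feature, and a flag from raw events. The event layout, the 7-day window, and the 0.5 cutoff are assumptions made for the example.

```python
# Hypothetical raw events for one customer: (day_index, payment_failed).
events = [(1, 0), (3, 0), (8, 1), (9, 0), (10, 1), (12, 1), (13, 0), (14, 1)]

def window(events, start_day, end_day):
    """Events with start_day <= day <= end_day."""
    return [e for e in events if start_day <= e[0] <= end_day]

def build_features(events, today, days=7):
    recent = window(events, today - days + 1, today)
    previous = window(events, today - 2 * days + 1, today - days)
    failures = sum(failed for _, failed in recent)
    failure_rate = failures / len(recent) if recent else 0.0
    return {
        "events_7d": len(recent),                       # time-windowed aggregate
        "failure_rate_7d": failure_rate,                # normalized rate
        "events_change": len(recent) - len(previous),   # trend / change feature
        "high_failure_flag": int(failure_rate >= 0.5),  # explicitly defined flag
    }

feats = build_features(events, today=14)
print(feats)
```

Each of these columns already encodes a nonlinearity or a comparison that a tree would otherwise have to discover through splits.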

2. When Interpretability Is a First-Class Requirement

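Tree models are explainable, but not simple: a single shallow tree is easy to read, while a forest or boosted ensemble of hundreds of trees needs extra tooling to explain.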

In high-stakes environments—finance, risk, operations—stakeholders often need answers like:

- Why did this case cross the threshold?

- What changed compared to last week?

- Can we justify this decision externally?

Logistic regression provides:

- Clear directionality

- Stable coefficients

- Predictable behavior under change

Even when tree models slightly outperform, teams often stick with logistic regression because trust and explainability matter more than marginal gains.
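
The question of why a case crossed the threshold has a direct answer in a logistic regression, because the log-odds are a plain sum of per-feature terms. A minimal sketch, with made-up coefficients and feature names:

```python
import math

# Hypothetical fitted model: intercept and coefficients on standardized features.
intercept = -2.0
coefficients = {"failure_rate_7d": 1.5, "events_change": 0.8, "account_age": -0.6}

def explain(case):
    """Return the predicted probability and each feature's log-odds contribution."""
    contributions = {name: coefficients[name] * value for name, value in case.items()}
    log_odds = intercept + sum(contributions.values())
    probability = 1 / (1 + math.exp(-log_odds))
    return probability, contributions

case = {"failure_rate_7d": 2.0, "events_change": 1.0, "account_age": -0.5}
probability, contributions = explain(case)

print(round(probability, 3))
# Largest contributions first: these are the reasons the score is what it is.
for name, value in sorted(contributions.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name:>16}: {value:+.2f}")
```

Comparing the same breakdown for last week’s data shows which term moved, which is much harder to do with an ensemble of trees.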

3. When Data Is Noisy or Drifting

Tree models are flexible—which is both a strength and a weakness.

In environments with:

- Frequent schema changes

- Shifting user behavior

- Inconsistent data quality

Tree models can:

- Overfit subtle noise

- React strongly to small distribution shifts

- Become harder to monitor and debug

Logistic regression has far fewer parameters and responds smoothly to each feature, so it tends to be more robust and forgiving under imperfect conditions.
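
One common way to keep an eye on this kind of drift, whichever model is deployed, is the population stability index (PSI), which compares the binned distribution of a feature or score between a reference period and a recent one. The sketch below assumes pre-binned proportions. The bin values are invented, and the often-quoted cutoffs of 0.1 and 0.25 are rules of thumb rather than standards.

```python
import math

def psi(expected, actual, eps=1e-6):
    """Population stability index between two binned distributions.

    Both inputs are lists of bin proportions that each sum to 1.
    eps guards against log(0) when a bin is empty.
    """
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        total += (a - e) * math.log(a / e)
    return total

# Hypothetical score distribution over five bins.
training  = [0.10, 0.20, 0.40, 0.20, 0.10]
last_week = [0.10, 0.20, 0.40, 0.20, 0.10]
this_week = [0.05, 0.15, 0.35, 0.25, 0.20]

print(psi(training, last_week))            # 0.0, nothing moved
print(round(psi(training, this_week), 3))  # positive: mass shifted to the top bins
```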

4. When Operational Simplicity Matters

Production systems are not Kaggle competitions.

Tree models usually require:

- More tuning

- More monitoring

- More careful retraining

- More infrastructure support

If the business impact of a better model is small, the added complexity may not be worth it.

This is one reason many teams experiment with tree models—but deploy logistic regression.

A Practical Decision Framework

Experienced teams rarely ask:

“Which model is better?”

They ask:

“What problem are we solving, and what constraints matter?”

A practical way to think about it:

Tree models are a good choice when:

- Interactions are complex and unknown

- Nonlinear effects are strong

- Interpretability is secondary

- You can support the operational cost

Logistic regression is often better when:

- Features are already strong

- Stability and trust matter

- Predicted probabilities feed directly into decisions, so calibration matters

- The system must be easy to maintain

Why Many Teams Use Both

In practice, the most common setup looks like this:

- Start with logistic regression as a baseline

- Learn the data and feature behavior

- Try tree models to test performance ceilings

- Deploy based on total system impact, not just metrics

Sometimes the tree model wins.

Often, it doesn’t—at least not enough to justify the trade-offs.
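
That final step can be made explicit with a simple promotion rule: compute the same metric for both models on held-out data, and switch only if the lift clears a bar that reflects the extra operational cost. The sketch below uses a pairwise AUC on invented scores. The `min_lift` value of 0.02 is a placeholder, not a recommendation.

```python
def auc(labels, scores):
    """Probability that a random positive outranks a random negative (ties count half)."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in positives for n in negatives)
    return wins / (len(positives) * len(negatives))

def choose_model(baseline_auc, challenger_auc, min_lift=0.02):
    """Keep the simpler baseline unless the challenger wins by at least min_lift."""
    return "challenger" if challenger_auc - baseline_auc >= min_lift else "baseline"

# Hypothetical held-out labels and scores from each model.
labels          = [0, 0, 0, 0, 1, 1, 1, 1]
logistic_scores = [0.1, 0.3, 0.4, 0.6, 0.5, 0.7, 0.8, 0.9]
tree_scores     = [0.2, 0.1, 0.3, 0.7, 0.6, 0.8, 0.9, 0.95]

baseline_auc = auc(labels, logistic_scores)
challenger_auc = auc(labels, tree_scores)
print(baseline_auc, challenger_auc, choose_model(baseline_auc, challenger_auc))
```

In this made-up case both models rank the held-out cases equally well, so the rule keeps the baseline.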

Final Thoughts

Tree models are powerful tools.

Logistic regression is a reliable one.

The mistake isn’t choosing one over the other—it’s assuming the choice is purely technical.

In applied data science, the “best” model is the one that fits the data, the business, and the system around it.

Knowing when tree models actually beat logistic regression—and when they don’t—is a sign you’re solving the right problem, not just using the latest technique.
